
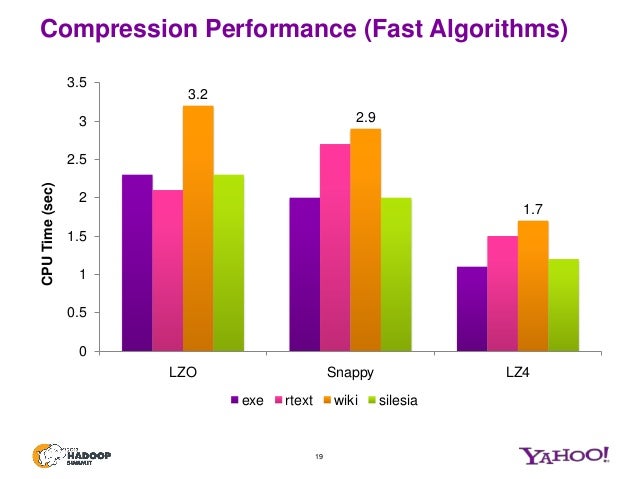

Snappy compression

6/5/2023

First, let's discuss what Snappy and LZ4 have in common. They are both algorithms designed to operate at "wire" speed (on the order of 1 GB/s per core) when compressing and decompressing. The main use case is to apply compression before writing data to disk or to the network, which usually operate nowhere near GB/s. Compressing data reduces IO, and it's transparent because the compression algorithm is so fast: faster than reading from or writing to the medium.

Both algorithms appeared in the early 2010s and can be considered relatively recent. It takes a good decade for newer technologies to gain adoption and for optimized, stable libraries to emerge in all popular languages. They are both widely usable now and have good libraries available (I am writing this in 2021), but that wasn't the case a few years ago.

They both compress at a similar speed and reach a similar compression ratio. The exception is decompression speed, where LZ4 is much faster.

For historical reference, there is a third algorithm called LZO that plays in the same league. It's much older (paper from 1996) and not widely used.

While they are both extremely fast, LZ4 is (slightly) faster and stronger, hence it should be preferred. In particular, when it comes to decompression speed, LZ4 is multiple times faster. LZ algorithms are generally extremely fast at decompression, because decoding does a small, roughly constant amount of work per output byte, and that's one of the reasons they are popular. LZ4 was constructed to take full advantage of that property and saturate CPU/memory bandwidth.

In addition, LZ4 is tunable: the compression level can be finely tuned from 1 to 16, which allows stronger compression if you have CPU to spare. It would be great if all software supporting LZ4 exposed the compression level as a setting, but not all do.

"Faster is better", of course, yet you may be tempted to ask whether it really matters at these kinds of speeds. Do we care whether a codec does 1 GB/s or 2 GB/s per core? The answer is yes, because the effect is noticeable. On-the-wire compression should keep up with the hardware it's running on, including NVMe SSDs (750+ MB/s) and local networks (1.25+ GB/s, i.e. 10 Gbit/s).

For client-server applications where the server receives and decompresses many streams from many clients, the cost of decompression can add up really quickly. One practical example is distributed queues like Kafka, which have to decompress and recompress data on the fly, adapting to whatever formats the many clients can send and receive.

Another major use case is databases, where data can be compressed before being stored on disk.
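
The claim that a fast codec makes compression "transparent" can be checked with a back-of-the-envelope model. The sketch below assumes a fully pipelined writer: raw data is compressed at `compress_mb_s` and the compressed output goes to a device that sustains `device_mb_s`. The function and parameter names are hypothetical. The 100 MB/s disk, 2:1 ratio and codec speeds are illustrative numbers, not measurements of Snappy or LZ4.

```python
def effective_write_throughput(compress_mb_s: float, device_mb_s: float, ratio: float) -> float:
    """Raw (uncompressed) MB/s that a pipelined compress-then-write path can sustain.

    Assumes compression and IO overlap perfectly, so the slower stage sets the pace.
    `ratio` is uncompressed size / compressed size (2.0 means output is half the input).
    """
    if compress_mb_s <= 0 or device_mb_s <= 0 or ratio <= 0:
        raise ValueError("speeds and ratio must be positive")
    # The device moves compressed bytes, which represent `ratio` times as much raw data.
    io_limited = device_mb_s * ratio
    return min(compress_mb_s, io_limited)


# Illustrative numbers: a 100 MB/s disk and data that compresses 2:1.
print("no compression: 100 MB/s of raw data")  # limited by the disk alone
for label, codec_speed in [("fast codec", 500.0), ("slow codec", 50.0)]:
    raw = effective_write_throughput(codec_speed, 100.0, 2.0)
    print(f"{label}: {raw:.0f} MB/s of raw data")
```

With a codec several times faster than the device, throughput doubles: the disk is still the bottleneck, but every byte it writes carries two bytes of data. A codec slower than the device becomes the bottleneck instead, which is why "faster than the medium" is the property that matters.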
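
The point about LZ decompression being cheap is easier to see in code. Below is a minimal, unoptimized sketch of a decoder for the LZ4 *block* format (not the framed `.lz4` file format). Each sequence is a token byte whose high nibble is the literal length and whose low nibble is the match length minus 4, with 15 meaning "more length bytes follow". The token is followed by the literals and then a 2-byte little-endian back-reference offset. The function names are hypothetical, the code does no input hardening, and pure Python says nothing about LZ4's real speed. It only shows the shape of the work: byte copies, with no entropy decoding.

```python
def _read_length(src: bytes, pos: int, base: int) -> tuple[int, int]:
    """Extend a 4-bit length: a nibble of 15 is followed by bytes added until one is < 255."""
    length = base
    if base == 15:
        while True:
            b = src[pos]
            pos += 1
            length += b
            if b != 255:
                break
    return length, pos


def lz4_block_decompress(src: bytes) -> bytes:
    out = bytearray()
    pos = 0
    while pos < len(src):
        token = src[pos]
        pos += 1

        # 1. Literals: copied verbatim from the input.
        lit_len, pos = _read_length(src, pos, token >> 4)
        out += src[pos:pos + lit_len]
        pos += lit_len
        if pos >= len(src):
            break  # The last sequence carries literals only.

        # 2. Match: a back-reference into the output decoded so far.
        offset = src[pos] | (src[pos + 1] << 8)
        pos += 2
        if offset == 0 or offset > len(out):
            raise ValueError("invalid match offset")
        match_len, pos = _read_length(src, pos, token & 0x0F)
        match_len += 4  # LZ4's minimum match length is 4.

        # Copy byte by byte so overlapping matches (offset < length) repeat correctly.
        start = len(out) - offset
        for i in range(match_len):
            out.append(out[start + i])
    return bytes(out)


# Literals "abc" + a 9-byte match at offset 3, then a final literals-only sequence "xyz".
block = bytes([0x35]) + b"abc" + b"\x03\x00" + bytes([0x30]) + b"xyz"
print(lz4_block_decompress(block))  # b'abcabcabcabcxyz'
```

Six input bytes of payload expand to fifteen output bytes. The decoder only ever reads a length and copies bytes, so the work per output byte stays small.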
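
The "1 GB/s or 2 GB/s per core" question reduces to arithmetic: how many cores must be dedicated to (de)compression to keep a device, a link, or a busy server saturated. This is a minimal sketch, assuming the codec scales linearly across cores, which is an optimistic simplification. The function name, the per-core speeds, and the 40 clients at 100 MB/s are illustrative. The device figures are the ones quoted in the post (10 Gbit/s ÷ 8 = 1250 MB/s).

```python
import math


def cores_needed(target_mb_s: float, per_core_mb_s: float) -> int:
    """Whole cores required so that codec throughput >= target throughput.

    Assumes perfect linear scaling across cores.
    """
    return math.ceil(target_mb_s / per_core_mb_s)


targets = {
    "NVMe SSD": 750,
    "10 Gbit/s network": 1250,
    "server, 40 clients x 100 MB/s": 40 * 100,  # hypothetical aggregate load
}
for name, mb_s in targets.items():
    for per_core in (1000, 2000):
        print(f"{name} at {per_core} MB/s/core: {cores_needed(mb_s, per_core)} core(s)")
```

Doubling per-core speed frees a whole core for a single 10 Gbit/s link, and two cores on the hypothetical server fed by many clients.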
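
The Kafka example boils down to a transcoding step. The server decompresses a batch with whatever codec the client used, recompresses it with the codec the server wants to keep, and skips the work when the two already match. The sketch below is hypothetical and is not Kafka's implementation. Snappy and LZ4 are not in Python's standard library, so it uses `zlib` and `lzma` as stand-in codecs to run without extra packages. The `CODECS` registry and `transcode` function are illustrative names.

```python
import lzma
import zlib
from typing import Callable, Dict, Tuple

# Hypothetical codec registry: name -> (compress, decompress).
# zlib and lzma stand in for Snappy/LZ4, which are not in the standard library.
CODECS: Dict[str, Tuple[Callable[[bytes], bytes], Callable[[bytes], bytes]]] = {
    "none": (lambda b: b, lambda b: b),
    "zlib": (zlib.compress, zlib.decompress),
    "lzma": (lzma.compress, lzma.decompress),
}


def transcode(batch: bytes, src_codec: str, dst_codec: str) -> bytes:
    """Re-encode a batch from the client's codec to the server's codec."""
    if src_codec == dst_codec:
        return batch  # Fast path: no decompression or recompression needed.
    _, decompress = CODECS[src_codec]
    compress, _ = CODECS[dst_codec]
    return compress(decompress(batch))


payload = b"event=click user=42\n" * 1000
from_client = zlib.compress(payload)             # what a client might send
stored = transcode(from_client, "zlib", "lzma")  # what the server keeps
assert lzma.decompress(stored) == payload
assert transcode(from_client, "zlib", "zlib") is from_client
```

Every batch that takes the slow path pays a full decompress plus compress. On a server fed by many clients, that cost is multiplied by the number of incoming streams.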
